NGINX Rift: Achieving NGINX Remote Code Execution via an 18-Year-Old Vulnerability

TLDR: We used depthfirst’s system to analyze the NGINX source code, and it autonomously discovered 4 remote memory corruption issues, including a critical heap buffer overflow introduced in 2008. We further investigated the exploitability of the issues, and developed a working proof of concept demonstrating RCE with ASLR off. If you use rewrite and set directives in your NGINX configuration, you’re at risk.

In mid-April, I was chatting with a colleague about the most vulnerable spot in our infrastructure. Since most of our services live entirely inside a private network, our app platform is the only exposed surface. He joked that achieving remote code execution on our web service would mean hacking into depthfirst completely. Hacking the web service itself is not my usual focus. However, the idea of hacking the underlying web server intrigued me, which directed my attention to NGINX.

NGINX is the most popular web server today, powering nearly a third of all websites globally. Its high performance architecture makes it the undisputed leader for handling massive volumes of web traffic. From serving static content to acting as an essential reverse proxy, it sits at the critical edge of the modern internet. A single vulnerability in this core infrastructure can therefore expose countless backend systems to severe risks.

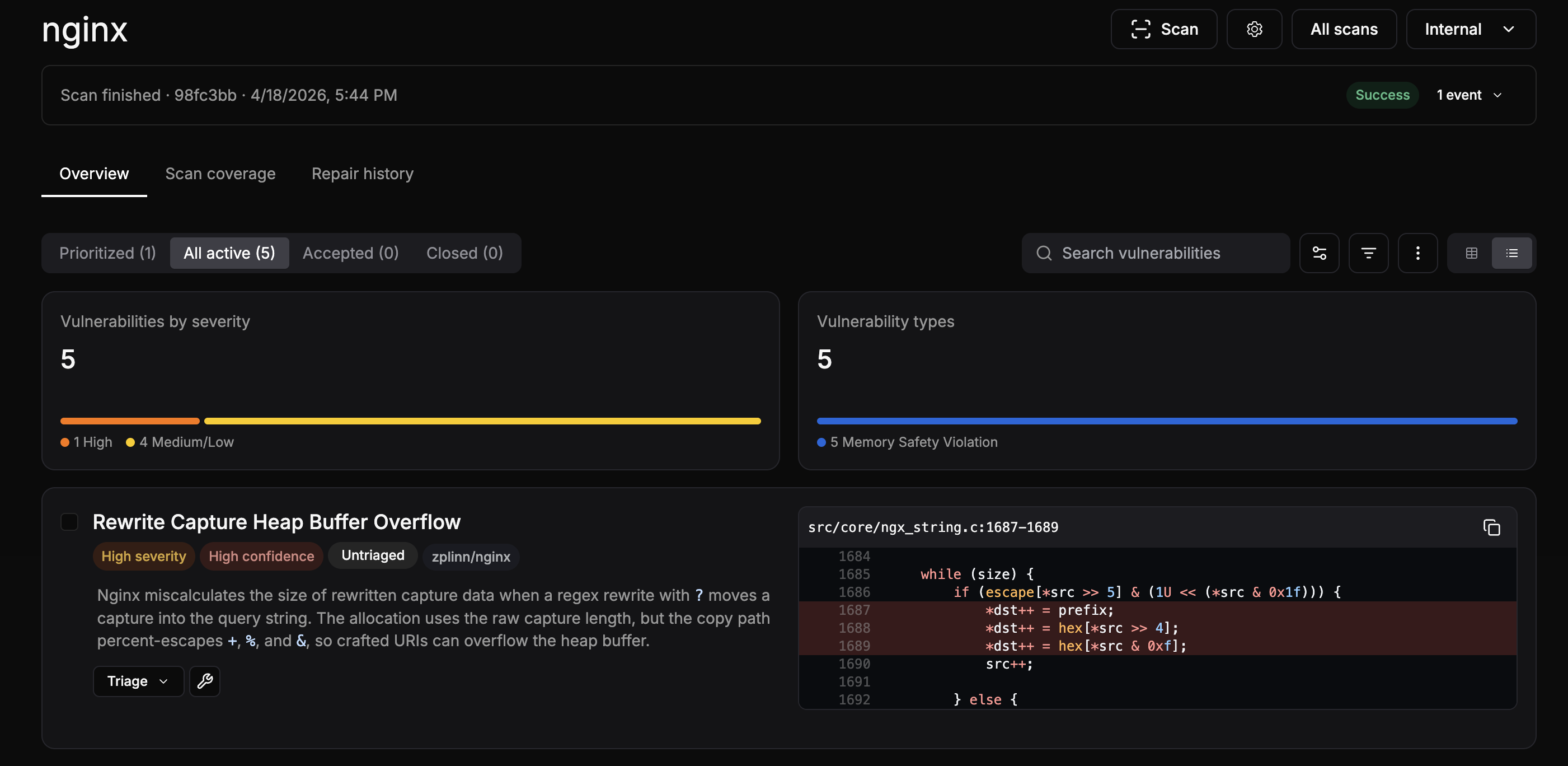

Internally, we have an autonomous system that specializes in analyzing low level software. Analyzing NGINX simply required a single click to onboard the repository and trigger the analysis. After six hours of scanning, the system identified 5 security issues including a high severity finding, which is a heap overflow issue when handling NGINX rewrite directive.

After briefly reviewing the findings, we reported the issues to NGINX via github security advisory. For each finding, we provided a detailed vulnerability description, root cause analysis, and a proof of concept generated directly by our system. 4 of the findings were confirmed by NGINX:

- CVE-2026-42945 (Critical, CVSS 9.2): A heap buffer overflow issue in

ngx_http_rewrite_module, an unpropagatedis_argsflag during arewriteandsetsequence causes an undersized buffer allocation. The copy phase then writes attacker-controlled escaped URI data past the heap boundary, leading to RCE. - CVE-2026-42946 (High, CVSS 8.3): An excessive memory allocation issue in

ngx_http_scgi_moduleandngx_http_uwsgi_module, a state mismatch after an incomplete upstream status line read causes a cross-buffer pointer subtraction. This produces a ~1 TB key length, crashing the worker process. - CVE-2026-40701 (Medium, CVSS 6.3): A use after free issue in

ngx_http_ssl_module, if a TLS connection closes before asynchronous OCSP DNS resolution completes, the context pool is destroyed without cancelling the resolver request. The DNS timer later dereferences the freed pointer. - CVE-2026-42934 (Medium, CVSS 6.3): An out-of-bounds read issue in

ngx_http_charset_module, an off-by-one error when handling incomplete UTF-8 sequences across proxy buffer boundaries corrupts the length state. This computes a negative source offset, reading 2 bytes before the allocated upstream buffer.

Among the 4 confirmed issues, CVE-2026-42945 is the most critical one. It is a heap buffer overflow issue that was introduced in 2008, impacting NGINX versions from 0.6.27 to 1.30.0. Given the high severity and the fact that it has been around for 18 years, we decided to investigate it in depth.

CVE-2026-42945

This vulnerability requires rewrite and set directives to trigger, but what are these directives?

Imagine you are migrating a legacy API to a new system. You need to seamlessly route incoming requests to the new endpoints. The rewrite directive allows you to modify the request path on the fly. However, your backend application might still need to know the original requested path. This is exactly where the set directive proves essential. It lets you capture and store the original path in a custom variable before the rewrite occurs. Together, these two directives are common building blocks in API gateway configurations.

The rewrite directive changes the request URI based on regular expressions. When a request matches the specified pattern, NGINX replaces the URI with a new string. For example, rewrite ^/api/(.*)$ /v2/api/$1 takes the matched part in the parentheses (a capture group) and appends it to the new path using the $1 variable. If the replacement string contains a question mark, NGINX treats the rest of the string as a query string and appends the original request arguments to it.

The set directive is used to assign a value to a custom variable. This is incredibly useful in practice for temporarily storing parts of the original request, dynamically routing endpoints, or maintaining state throughout the request lifecycle before subsequent rewrites alter the URI. Similar to the rewrite directive, it can also reference capture groups from the most recently executed regular expression. For instance, a configuration might use set $original_path $1 to save the value of the first capture group into a variable named original_path. This ensures that backend applications or access logs still have access to the original requested endpoint even after the URI has been completely rewritten.

Under the hood, NGINX optimizes these operations using its script engine. When parsing the configuration, the script engine compiles these directives into a sequence of operations. During runtime, it executes them in a two pass process. The first pass calculates the total length of the final string to allocate the exact amount of memory needed from its memory pool. The second pass then executes copy operations to write the actual data into the newly allocated buffer. This design avoids multiple small memory allocations, but it requires the length calculated in the first pass perfectly matches the amount of data written in the second pass. If the engine state changes between these two passes, a memory corruption vulnerability can occur.

The Root Cause

As mentioned, the script engine uses a two pass process. First, it calculates the required memory length. Then, it copies the actual data. A heap buffer overflow occurs here because the internal engine state changes between these two passes.

Specifically, the vulnerability resides in src/http/ngx_http_script.c. The flaw is triggered when a rewrite directive contains a question mark in its replacement string. This causes the ngx_http_script_start_args_code function to permanently set the e->is_args = 1 flag on the script engine:

void

ngx_http_script_start_args_code(ngx_http_script_engine_t *e)

{

ngx_log_debug0(NGX_LOG_DEBUG_HTTP, e->request->connection->log, 0,

"http script args");

e->is_args = 1;

e->args = e->pos;

e->ip += sizeof(uintptr_t);

}This flag is never reset between script code evaluations. When a subsequent set directive references a regex capture group, it triggers the ngx_http_script_complex_value_code function. This is where the two pass design breaks down. During the length calculation pass, this function uses a fresh, completely zeroed out sub engine called le.

void

ngx_http_script_complex_value_code(ngx_http_script_engine_t *e)

{

size_t len;

ngx_http_script_engine_t le;

ngx_http_script_len_code_pt lcode;

ngx_http_script_complex_value_code_t *code;

code = (ngx_http_script_complex_value_code_t *) e->ip;

e->ip += sizeof(ngx_http_script_complex_value_code_t);

ngx_log_debug0(NGX_LOG_DEBUG_HTTP, e->request->connection->log, 0,

"http script complex value");

ngx_memzero(&le, sizeof(ngx_http_script_engine_t)); // fully zeroed sub engine

le.ip = code->lengths->elts;Because it is initialized with zeros, le.is_args is zero. The length calculation function, ngx_http_script_copy_capture_len_code, checks the following condition to decide if escaping is needed:

size_t

ngx_http_script_copy_capture_len_code(ngx_http_script_engine_t *e)

{

...

if ((e->is_args || e->quote)

&& (e->request->quoted_uri || e->request->plus_in_uri))

{

p = r->captures_data;

return cap[n + 1] - cap[n]

+ 2 * ngx_escape_uri(NULL, &p[cap[n]], cap[n + 1] - cap[n],

NGX_ESCAPE_ARGS);

} else {

return cap[n + 1] - cap[n];

}

...Because le.is_args is zero, this condition evaluates to false. It falls through to the else branch and simply returns the raw, unescaped capture length. However, during the second copy pass, the copy function ngx_http_script_copy_capture_code runs on the main engine where e->is_args is still set to 1. The exact same condition now evaluates to true, entering a different logic branch:

void

ngx_http_script_copy_capture_code(ngx_http_script_engine_t *e)

{

...

if ((e->is_args || e->quote)

&& (e->request->quoted_uri || e->request->plus_in_uri))

{

...

// OVERFLOW HAPPENS HERE

// The destination buffer `pos` was allocated with `raw_size`,

// but `ngx_escape_uri` expands the characters and writes

// the much larger `raw_size + 2 * N` bytes directly into it!

e->pos = (u_char *) ngx_escape_uri(pos, &p[cap[n]],

cap[n + 1] - cap[n],

NGX_ESCAPE_ARGS);

} else {

e->pos = ngx_copy(pos, &p[cap[n]], cap[n + 1] - cap[n]);

}It calls ngx_escape_uri with the NGX_ESCAPE_ARGS flag. This function expands every escapable character, like a plus sign or ampersand, from one byte to three bytes.

Consider the following trigger configuration:

location ~ ^/api/(.*)$ {

rewrite ^/api/(.*)$ /internal?migrated=true;

set $original_endpoint $1;

}Because of the unexpected state change, the copy function writes raw_size + 2 * N bytes into a buffer allocated for only raw_size bytes, where N is the number of escapable characters. The escaped output written during the second pass becomes significantly larger. This mismatch causes the data to completely overflow the allocated pool boundary.

Exploitation

Luckily, NGINX uses a multi process architecture where worker processes fork from a single master process. Because of this design, the memory space is duplicated exactly for every child worker. This means the heap layout remains entirely deterministic across different workers. If our exploit fails and crashes a worker, the master process simply spawns a new one with the exact same memory layout. This allows us to safely try multiple times until we succeed without worrying about the worker crashing and changing the memory layout. Theoretically, we could leverage this design to leak ASLR by progressively overwriting pointers byte by byte. In this post, we discuss the exploitation technique assuming ASLR has already been bypassed.

The vulnerability gives us a highly controllable heap buffer overflow. By padding the request URI with plus signs, we can force the escaping function to expand each byte into three bytes, overflowing the allocated chunk. The size of the overflow is completely under our control based on the number of escapable characters we provide. However, we face a major restriction. The bytes we use to overwrite adjacent memory are passed through the URI parser and the escaping function. This means we cannot simply inject arbitrary bytes. Our payload is strictly limited to URI safe characters. So, without null bytes, how can we craft a pointer?

Overwriting ngx_pool

To turn this overflow into code execution, we need a reliable target. NGINX uses memory pools to manage allocations per connection and per request. The memory pool is defined by the ngx_pool_t structure, which contains essential metadata for managing the allocator state.

struct ngx_pool_s {

ngx_pool_data_t d;

size_t max;

ngx_pool_t *current;

ngx_chain_t *chain;

ngx_pool_large_t *large;

ngx_pool_cleanup_t *cleanup;

ngx_log_t *log;

};Our ultimate goal is to overwrite the cleanup pointer at offset 64. This field points to a linked list of ngx_pool_cleanup_t structures, which hold function pointers (handler) and their arguments (data) to be executed when the pool is destroyed:

typedef void (*ngx_pool_cleanup_pt)(void *data);

struct ngx_pool_cleanup_s {

ngx_pool_cleanup_pt handler;

void *data;

ngx_pool_cleanup_t *next;

};However, because our heap overflow is contiguous, we face a major challenge. To reach the cleanup pointer, we must first overwrite all the preceding fields in the pool structure: d, max, current, chain, and large.

Filling these metadata fields with our URI safe padding bytes (like %2B) completely corrupts the internal state of the pool allocator. If the victim connection attempts to allocate more memory, read from the network, or process further data, NGINX will inevitably dereference one of these corrupted pointers and crash prematurely. This premature crash would completely prevent our exploit from succeeding.

To bypass this, we must ensure the pool is destroyed immediately after the corruption occurs, before any of the corrupted allocation fields are used. We achieve this perfect timing using a cross request heap feng shui technique. The attacker controls the heap layout and the exact lifecycle of the pools through connection ordering:

- Open an initial connection and send partial headers. NGINX allocates a request pool for this connection.

- Open a second victim connection, which allocates a victim pool exactly adjacent to the first pool.

- Complete the initial headers, triggering the rewrite overflow directly out of the first pool and into the adjacent victim pool header.

- Immediately close the victim connection, which will call

ngx_destroy_poolto destroy the victim pool.

This precision allows us to reliably corrupt the victim pool header, without crashing the worker process. This works because when destroying the pool, NGINX iterates the cleanup linked list but not touching any of the corrupted fields in the pool structure. After this, we obtain the primitive of dereferencing arbitrary ngx_pool_cleanup_s.

Spraying Fake ngx_pool_cleanup_s

As we can only write URI safe characters, we need a way to inject arbitrary binary pointers (which often contain null bytes) into the victim pool header. We achieve this by spraying the heap with POST request bodies. Unlike HTTP headers or request URIs which are strictly parsed, POST bodies are treated as raw data streams and can contain arbitrary binary payloads, including null bytes. We construct a spray payload containing a fake cleanup structure pointing to the libc system function, followed by a user-supplied command string.

for (c = pool->cleanup; c; c = c->next) {

if (c->handler) {

c->handler(c->data);

}

}Because the heap layout is highly predictable across workers, our fake structures sprayed via POST will land at fixed offsets. We could bruteforce this address where our fake structures land, and explicitly filters them to find an address that consists entirely of URI-safe bytes. This guarantees the address used to overwrite the cleanup pointer will survive the escaping function intact. Note that we can’t overflow with null bytes, we can just overwrite the lower address of the cleanup pointer, making it referencing the faked ngx_pool_cleanup_s object we sprayed. Finally, we close the victim socket from the client side, triggering ngx_destroy_pool in NGINX worker process, which iterates pool->cleanup linked list to execute all registered handlers, and executing our injected command with system function.

The source code of the proof of concept is available on our GitHub repository.

Affected Versions

- NGINX Open Source 0.6.27 through 1.30.0

- NGINX Plus R32 through R36.

- NGINX Instance Manager 2.16.0 through 2.21.1.

- F5 WAF for NGINX 5.9.0 through 5.12.1.

- NGINX App Protect WAF 4.9.0 through 4.16.0 and 5.1.0 through 5.8.0.

- F5 DoS for NGINX 4.8.0.

- NGINX App Protect DoS 4.3.0 through 4.7.0.

- NGINX Gateway Fabric 1.3.0 through 1.6.2 and 2.0.0 through 2.5.1.

- NGINX Ingress Controller 3.5.0 through 3.7.2, 4.0.0 through 4.0.1, and 5.0.0 through 5.4.1.

Timeline

- 4/18/2026: We used depthfirst’s internal system to analyze the NGINX source code, and it reported 5 memory corruption issues.

- 4/21/2026: We reported all 5 issues to NGINX via a GitHub security advisory.

- 4/24/2026: NGINX confirmed 4 of the reported issues.

- 4/28/2026: We informed NGINX that we had developed a working PoC demonstrating RCE.

- 5/5/2026: We shared our RCE PoC with NGINX and attached a demo video.

- 5/13/2026: F5 released the NGINX security advisory.

- 5/13/2026: This blog post was published.